Here is the second article dedicated to our new reverse-proxy engine and its awesome features! After the Web Application Firewall, we now take a look at the HTTP cache built into our infrastructure.

What is an HTTP cache?

A good blog post is a post with a chart

We tested the performance of our WordPress blog using the new HTTP cache built into our proxy. Here is the result, which makes us bet that you may like this new feature:

The number of requests handled by the proxy improves considerably when we enable the Cache. Without it, we only serve 15 req/s; with it, the rate rises to 2604 req/s, a factor of 173 under the same conditions. The response time also improves, falling from approximately 63.65ms to 0.38ms. Not bad for a feature that takes no effort to use!
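As a quick sanity check, the ratios can be recomputed from the rounded figures quoted above. The variable names are only illustrative.

```python
# Figures quoted in this post (rounded values from the benchmark).
req_per_s_without_cache = 15
req_per_s_with_cache = 2604
latency_ms_without_cache = 63.65
latency_ms_with_cache = 0.38

# How many times more requests per second the proxy serves with the Cache.
throughput_factor = req_per_s_with_cache / req_per_s_without_cache

# How many times shorter the response time is with the Cache.
latency_factor = latency_ms_without_cache / latency_ms_with_cache

print(f"throughput: x{throughput_factor:.1f}")
print(f"latency:    x{latency_factor:.1f}")
```

Both ratios land in the same range: a little over 173 for throughput and a little over 167 for response time.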

We made this benchmark using ApacheBench, requesting the blog homepage. We ran each test four times, with and without the Cache enabled, before compiling the results. Our blog runs on a dedicated server, but we expect a similar ratio for shared hosting instances. You can run the test yourself by connecting to your account using SSH and running the ab command with the same options against your website.

How does it work?

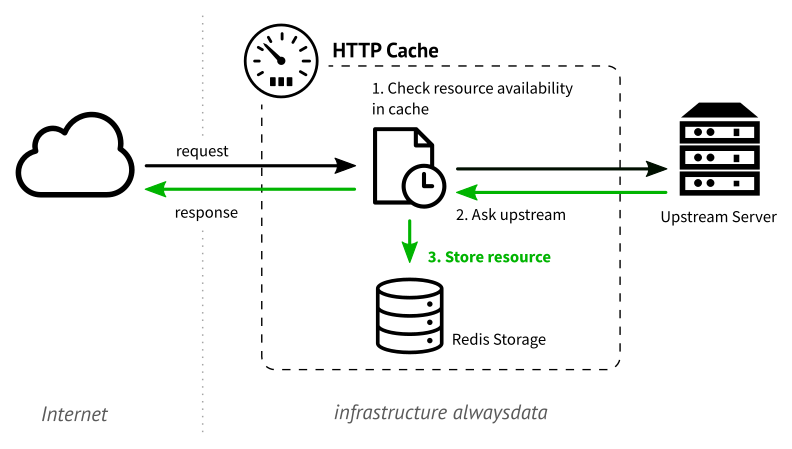

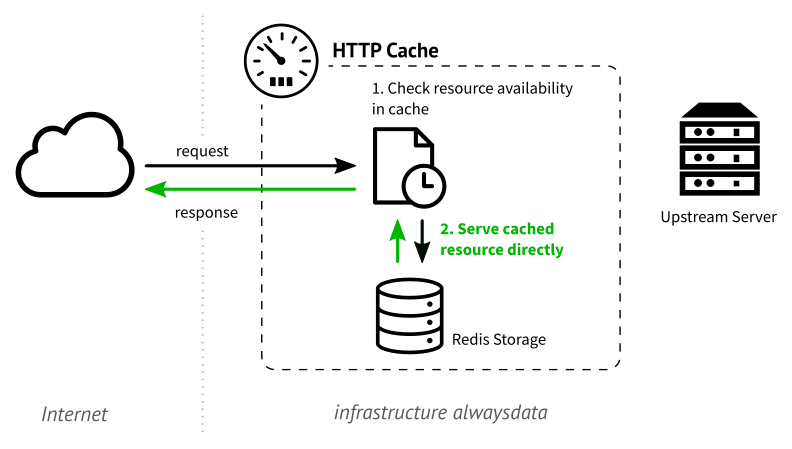

A Cache is a temporary storage that keeps results so it can serve them again when requested. An HTTP Cache is a cache that stores web pages and assets. It is primarily used to decrease the load on an upstream server that must serve a frequently requested page which does not change between two requests.

When a client requests a page from a web server, the server generates an HTML response and sends it to the client over the network. Before the response leaves the infrastructure, the HTTP Cache intercepts it and stores it in memory before letting it go.

When our proxy encounters the same request again, it asks the Cache for an available version. If the page is available in the Cache memory, that version is served instead of asking the upstream server.
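The flow described above can be sketched in a few lines of Python. This is a toy illustration, not our actual module: `CachingProxy`, `render_page`, and the plain dictionary used as storage are all made up for the example, and expiry is left out on purpose.

```python
from typing import Callable, Dict, List


class CachingProxy:
    """Toy proxy: answers from memory when it can, else asks the upstream."""

    def __init__(self, upstream: Callable[[str], str]) -> None:
        self._upstream = upstream
        self._store: Dict[str, str] = {}

    def handle(self, url: str) -> str:
        # Cache hit: serve the stored copy without touching the upstream.
        if url in self._store:
            return self._store[url]
        # Cache miss: ask the upstream, keep a copy, then let the response go.
        response = self._upstream(url)
        self._store[url] = response
        return response


upstream_calls: List[str] = []


def render_page(url: str) -> str:
    """Stand-in for the upstream application generating an HTML page."""
    upstream_calls.append(url)
    return f"<html>page for {url}</html>"


proxy = CachingProxy(render_page)
first = proxy.handle("/blog/")   # generated by the upstream
second = proxy.handle("/blog/")  # served from the cache
print(len(upstream_calls))       # the upstream was only asked once
```

The second request never reaches `render_page`, which is where the gain in requests per second comes from.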

Use it at alwaysdata

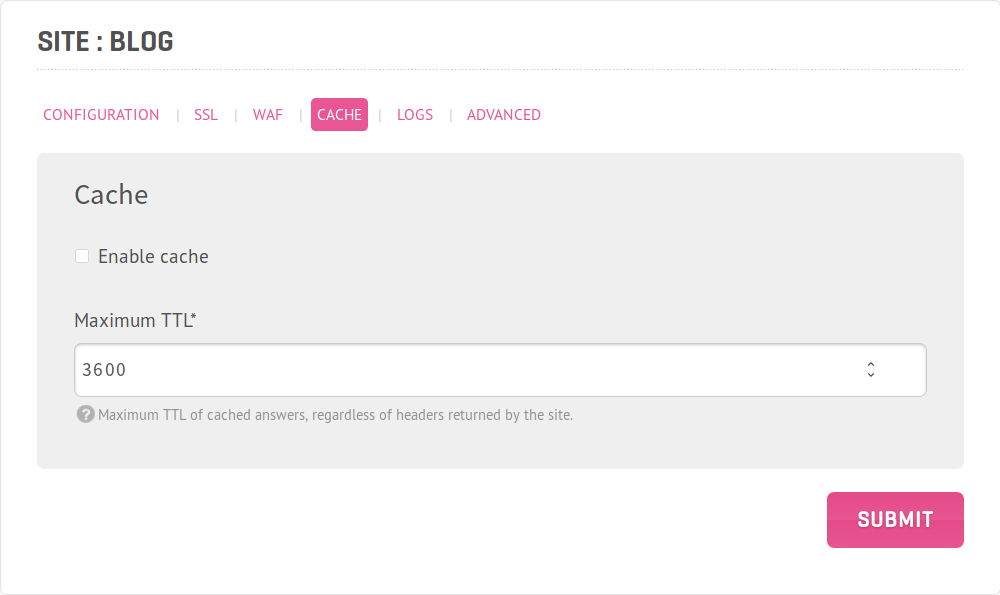

If you want to use the HTTP Cache, you can enable it for any site individually in the Sites → Edit → Cache section. Tick the Enable the cache option.

You must set the TTL for the pages served by this website. The TTL defines how long the Cache retains a page before expiring it. Choose it carefully: we recommend a high TTL for pages that are rarely modified, but you must reduce it for highly dynamic content like a news website. If you set too long a duration, your visitors may see an outdated page.

For instance, we want every visitor to see an up-to-date homepage. When we publish a new article, the previous version of the homepage becomes outdated. We therefore prefer a TTL between 5 and 10 seconds. This way, we benefit from the high performance offered by the Cache with a relatively low risk of serving an old page.
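To make the effect of the TTL concrete, here is a minimal sketch of an expiring cache. `TTLCache` and its injectable `clock` are hypothetical names chosen for the example (the fake clock just avoids waiting ten real seconds); our production cache relies on Redis rather than a Python dictionary.

```python
import time
from typing import Callable, Dict, Optional, Tuple


class TTLCache:
    """Toy cache whose entries expire `ttl_seconds` after being stored."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl = ttl_seconds
        self._clock = clock
        self._entries: Dict[str, Tuple[float, str]] = {}

    def put(self, key: str, body: str) -> None:
        # Remember when the page was stored alongside its content.
        self._entries[key] = (self._clock(), body)

    def get(self, key: str) -> Optional[str]:
        entry = self._entries.get(key)
        if entry is None:
            return None
        stored_at, body = entry
        # Once the TTL has elapsed, drop the entry and report a miss.
        if self._clock() - stored_at >= self.ttl:
            del self._entries[key]
            return None
        return body


# Fake clock so the example runs instantly.
now = [0.0]
cache = TTLCache(ttl_seconds=10, clock=lambda: now[0])

cache.put("/", "homepage v1")
now[0] = 5.0
fresh = cache.get("/")    # 5 s old: still served from the cache
now[0] = 10.0
expired = cache.get("/")  # 10 s old: expired, the upstream must be asked
```

With a 10 second TTL, a visitor can see a homepage that is at most 10 seconds behind the latest publication.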

This feature is currently in beta and may evolve over the next few weeks.

This feature needs your application or website to authorize the Cache to handle its responses. If resources aren’t explicitly marked as _cacheable_ by your app, there is a risk that our HTTP Cache can’t store them.
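In HTTP, an application usually marks a response as cacheable with the `Cache-Control` header defined by RFC 7234. The sketch below is a bare WSGI application that does so. The `app` name and the `public, max-age=10` value are only an example, and exactly which directives our Cache takes into account, and how they combine with the TTL set in the administration panel, is not covered in this post.

```python
from typing import Callable, Iterable, List, Tuple


def app(environ: dict, start_response: Callable) -> Iterable[bytes]:
    """Minimal WSGI app that declares its response cacheable by shared caches."""
    body = b"<html><body>Hello</body></html>"
    headers: List[Tuple[str, str]] = [
        ("Content-Type", "text/html; charset=utf-8"),
        ("Content-Length", str(len(body))),
        # "public" allows shared caches to store the response;
        # "max-age=10" declares it fresh for 10 seconds.
        ("Cache-Control", "public, max-age=10"),
    ]
    start_response("200 OK", headers)
    return [body]
```

Conversely, a response sent with `Cache-Control: no-store` tells caches not to keep it, which is the usual choice for pages containing private data.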

What’s behind the scenes?

For technical people, here’s how we implemented the cache. We chose to write a module in Python that follows RFC 7234. A local Redis instance stores the cached resources, which lets us manage the memory dedicated to the storage effortlessly.

We also chose to implement the HTTP PURGE verb. This method allows you to remove the cached version of a resource by calling it on its URL. You can then easily force the Cache to refresh.
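As an illustration, a PURGE request can be sent with Python's standard `http.client` module, which accepts arbitrary method names. The `purge` helper, its default port, and its timeout are assumptions made for this sketch. The status code returned by the Cache is not documented here, so the function simply hands it back.

```python
import http.client


def purge(host: str, path: str, port: int = 80, timeout: float = 5.0) -> int:
    """Send a PURGE request for `path` on `host` and return the HTTP status."""
    # For a site served over HTTPS, http.client.HTTPSConnection
    # (with port 443) would be used instead.
    conn = http.client.HTTPConnection(host, port, timeout=timeout)
    try:
        conn.request("PURGE", path)
        response = conn.getresponse()
        response.read()  # drain the body so the connection closes cleanly
        return response.status
    finally:
        conn.close()
```

Calling `purge("www.example.org", "/blog/")` (a placeholder host) right after publishing an article would ask the Cache to drop its copy of that page, so the next visitor gets a freshly generated version.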

After performance, we made a significant effort on logging! In our next and last blog post, we will introduce the new logging system, which lets you store custom-formatted logs so you can debug your upstream applications effortlessly.

Hi,

Awesome! I just started to prepare my site to set caching headers to achieve this. I got a question though.

At the end of https://tools.ietf.org/html/rfc7234#section-4.2.2 , there’s this:

“Note: Section 13.9 of [RFC2616] prohibited caches from calculating heuristic freshness for URIs with query components (i.e., those containing ‘?’…”

So my question is: are these pages with different GET parameters stored as different pages? Or all of these requests with the same path have one single cache entry?

Hello,

Pages with different GET parameters are indeed stored as different pages, as expected.
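To illustrate the idea, a cache key that includes the query string could look like the sketch below. The `cache_key` function and the key format are hypothetical and only meant to show why `?page=1` and `?page=2` end up in separate entries. This is not the exact key used by the proxy.

```python
from urllib.parse import urlsplit


def cache_key(method: str, url: str) -> str:
    """Build a key from the method, host, path, and query string of a request."""
    parts = urlsplit(url)
    path = parts.path or "/"
    # Keeping the query string in the key separates pages that share a path.
    target = f"{path}?{parts.query}" if parts.query else path
    return f"{method.upper()} {parts.netloc.lower()}{target}"


key_page_1 = cache_key("GET", "https://example.org/articles?page=1")
key_page_2 = cache_key("GET", "https://example.org/articles?page=2")
key_page_1_again = cache_key("get", "https://EXAMPLE.org/articles?page=1")

print(key_page_1 != key_page_2)        # different parameters, different entries
print(key_page_1 == key_page_1_again)  # same request, same entry
```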

Hello,

Can we enable the cache for some URLs only?

Regards

@Pierre-Jean Buron: URLs are cached according to their headers, so your application can define what URLs will be cached (or not cached).

Don’t use ‘ab’ for load testing. You’ll end up contending on open file handles and other system resources on the client instead of actually testing the server.

@Philip Tellis: ‘ab’ has flaws, but it’s perfectly capable of testing a server in most cases, and in particular to demonstrate a trivial x100 or x1000 performance gain.

It basically uses one open file handle per concurrent connection. We used 10 concurrent connections in our example, and the default max open files on our servers is… 65536. I agree that ‘ab’ can be the bottleneck, but not in such simple cases.